Mar 17, 2026

From virtio-snd 0-Day to Hypervisor Escape: Exploiting QEMU with an Uncontrolled Heap Overflow

Turning an uncontrolled heap overflow into a reliable QEMU guest-to-host escape using new glibc allocator behavior and QEMU-specific heap spray techniques.

Heap overflows are often exploitable, but far less so when the corrupted bytes are not under your control. In many cases, that kind of bug is written off as a crash and nothing more. However, in this post we show how we turned such an overflow into a reliable QEMU guest-to-host escape by abusing new glibc allocator behavior and QEMU-specific heap spray techniques.

QEMU

QEMU is a machine emulator and virtualizer that lets a host system run guest operating systems. It presents the guest with virtual hardware, while the logic backing that hardware runs inside the host-side QEMU process.

Virtio Devices

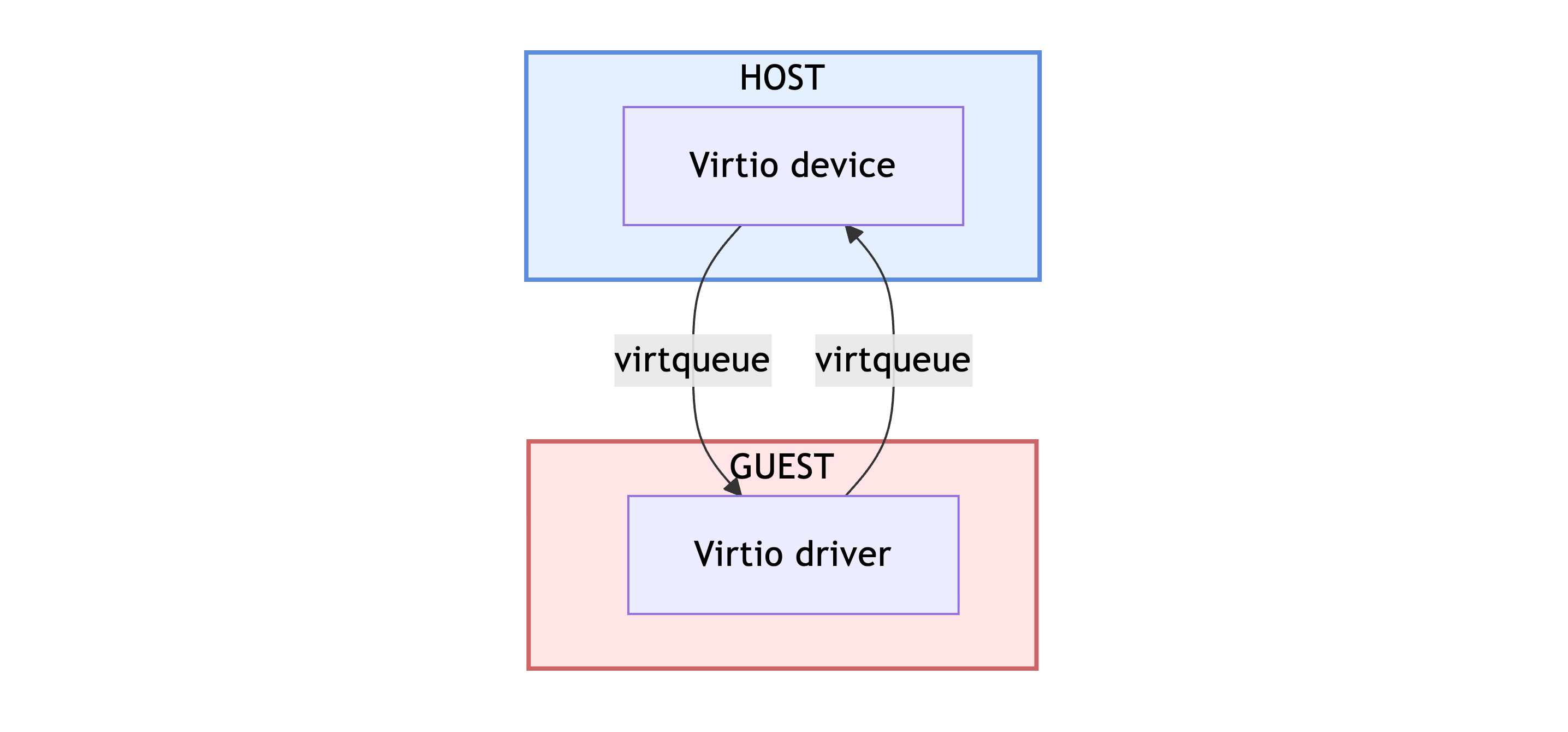

For guest-to-host escape research, the interesting part of QEMU is the interface between the guest and those host-side device implementations. Every request sent by the guest is eventually parsed and handled by code running in the QEMU process. This is interesting because any unhandled edge case in the device could lead to some kind of host state corruption.

At a high level, the communication between the driver running in the guest and the device running on the host is simple - the guest-side virtio driver shares requests over virtqueues, while the host-side virtio device consumes those requests, processes and returns responses.

Finding a Bug

While looking for devices to research, we focused on ones that seemed to have received less scrutiny in the past. With that in mind, we started with the sound device virtio-snd.

virtio-snd

From the official documentation:

Virtio sound implements capture and playback from inside a guest using the configured audio backend of the host machine.

Essentially, it allows software running inside the guest to interact with the host's audio stack through a paravirtualized sound device. Playback streams send guest-provided audio data to the host backend, while capture streams let the guest receive audio input from the host.

Audio Data Buffers

This audio data flows through buffers allocated by the host-side virtio-snd device and stored in a FIFO linked list for the corresponding stream.

For example, the following is virtio_snd_handle_rx_xfer, which is responsible for allocating buffers for an input audio stream:

/*

* The rx virtqueue handler. Makes the buffers available to their

* respective streams for consumption.

*

* @vdev: VirtIOSound device

* @vq: rx virtqueue

*/

static void virtio_snd_handle_rx_xfer(VirtIODevice *vdev, VirtQueue *vq)

{

VirtQueueElement *elem;

[...]

for (;;) {

VirtIOSoundPCMStream *stream;

elem = virtqueue_pop(vq, sizeof(VirtQueueElement)); // [1]

if (!elem) {

break;

}

[...]

WITH_QEMU_LOCK_GUARD(&stream->queue_mutex) {

size = iov_size(elem->in_sg, elem->in_num) -

sizeof(virtio_snd_pcm_status); // [2]

buffer = g_malloc0(sizeof(VirtIOSoundPCMBuffer) + size);

buffer->elem = elem;

buffer->vq = vq;

buffer->size = 0;

buffer->offset = 0;

QSIMPLEQ_INSERT_TAIL(&stream->queue, buffer, entry); // [3]

}

continue;

[...]

}

At [1], a VirtQueueElement *elem is popped from the virtqueue. It contains the in_sg and out_sg iovecs that describe the guest request, and is therefore fully guest-controlled.

Further at [2], the device computes the size of the data buffer as iov_size(elem->in_sg, elem->in_num) - sizeof(virtio_snd_pcm_status). That value is then used in the allocation: g_malloc0(sizeof(VirtIOSoundPCMBuffer) + size). Finally, at [3], the newly allocated buffer is appended to the stream->queue linked list.

Because both the in_sg iovec and the in_num field are guest-controlled, and there is no check that the total in_sg size is at least sizeof(virtio_snd_pcm_status), this calculation can underflow if the guest provides a smaller input buffer - that gives us our first bug.

From the guest driver, we can provide an empty in_sg iovec. In that case, the calculation becomes 0 - sizeof(virtio_snd_pcm_status), so the allocation size effectively becomes sizeof(VirtIOSoundPCMBuffer) - 8. Given the definition of VirtIOSoundPCMBuffer:

struct VirtIOSoundPCMBuffer {

QSIMPLEQ_ENTRY(VirtIOSoundPCMBuffer) entry;

VirtQueueElement *elem;

VirtQueue *vq;

size_t size;

uint64_t offset;

/* Used for the TX queue for lazy I/O copy from `elem` */

bool populated;

uint8_t data[];

};

That under-allocation removes the populated field along with the variable-sized data array. As the comment says, populated is only relevant to the TX path and is not used for audio input. However, by making the iovec size 1, the device believes data should be 1 byte, while the actual allocation is sizeof(VirtIOSoundPCMBuffer) - 7.

Populating Data Buffers

Let's take a look at how the allocated data buffer for the input stream is filled:

/*

* AUD_* input callback.

*

* @data: VirtIOSoundPCMStream stream

* @available: number of bytes that can be read with AUD_read()

*/

static void virtio_snd_pcm_in_cb(void *data, int available)

{

VirtIOSoundPCMStream *stream = data;

VirtIOSoundPCMBuffer *buffer;

size_t size, max_size;

WITH_QEMU_LOCK_GUARD(&stream->queue_mutex) {

while (!QSIMPLEQ_EMPTY(&stream->queue)) {

buffer = QSIMPLEQ_FIRST(&stream->queue);

[...]

max_size = iov_size( // [1]

buffer->elem->in_sg,

buffer->elem->in_num

);

for (;;) {

if (buffer->size >= max_size) { // [2]

return_rx_buffer(stream, buffer);

break;

}

size = AUD_read(stream->voice.in,

buffer->data + buffer->size,

MIN(available, (stream->params.period_bytes - // [3]

buffer->size)));

if (!size) {

available = 0;

break;

}

buffer->size += size;

available -= size;

[...]

}

}

}

}

At [1], max_size is set to iov_size(in_sg, in_num). Both in_sg and in_num are the same guest-controlled fields from virtio_snd_handle_rx_xfer.

Later, at [2], the code checks whether buffer->size >= max_size. In the RX path, buffer->size tracks how many bytes have been written into buffer->data, not the size of the allocation itself. This check is therefore intended to stop reading once the buffer is full.

However, this does not match the allocation logic in virtio_snd_handle_rx_xfer, which used: size = iov_size(elem->in_sg, elem->in_num) - sizeof(virtio_snd_pcm_status);. In other words, the allocation subtracts sizeof(virtio_snd_pcm_status), but the later bound in virtio_snd_pcm_in_cb does not. That mismatch gives us a second bug: an 8-byte OOB write.

Finally, at [3], the code calls AUD_read with the following limit:

MIN(available, stream->params.period_bytes - buffer->size). Notice how this bound does not take max_size into account at all. That means if available is larger than the allocated buffer, and stream->params.period_bytes is also larger than the allocated buffer, AUD_read will write past the end of buffer->data - the third, and final, bug we found.

Looking further at the code, we can see that stream->params.period_bytes is fully guest-controlled by issuing a VIRTIO_SND_R_PCM_SET_PARAMS request:

static

uint32_t virtio_snd_set_pcm_params(VirtIOSound *s,

uint32_t stream_id,

virtio_snd_pcm_set_params *params)

{

virtio_snd_pcm_set_params *st_params;

[...]

st_params = virtio_snd_pcm_get_params(s, stream_id);

[...]

st_params->buffer_bytes = le32_to_cpu(params->buffer_bytes);

st_params->period_bytes = le32_to_cpu(params->period_bytes);

st_params->features = le32_to_cpu(params->features);

/* the following are uint8_t, so there's no need to bswap the values. */

st_params->channels = params->channels;

st_params->format = params->format;

st_params->rate = params->rate;

return cpu_to_le32(VIRTIO_SND_S_OK);

}

Among the guest-controlled PCM parameters, format matters later for exploit reliability. For 8-bit PCM, QEMU accepts both unsigned (u8) and signed (s8) samples. They encode the same waveform differently - silence is 0x80 in u8, but 0x00 in s8.

To summarize:

- an integer underflow in the

sizecalculation invirtio_snd_handle_rx_xfer, resulting in an 8-byte (or less) under-allocation - a mismatch in the

max_sizecalculation invirtio_snd_pcm_in_cb, leading to at most 8-byte OOB write - a missing bound in the

sizepassed toAUD_read, which does not take the actual buffer allocation size into account and can therefore lead to an OOB write of an arbitrary length, up toavailablebytes

In our exploit, we focus on the third bug because it provides the largest overflow and therefore the most useful primitive. In practice, the actual write is still bounded by available, but in our setup with the ALSA backend, available was consistently around 4096.

It is also worth noting that the timing here was particularly unlucky - these bugs had been present in QEMU for over two years, but they were fixed (commit 1, commit 2) in the very same week that we independently found them while manually reviewing the code.

Exploit

Each of these bugs is in the audio input path. Since that audio input comes from the host side, the bytes written out of bounds are not controlled by the guest and, from the exploit perspective, can be treated as effectively random.

This gives an interesting challenge: how do you exploit an out-of-bounds write when you do not control the data being written?

Achieving a Better Primitive

The first idea that comes to mind is to target some kind of size or offset field. The goal is to make that field as small as possible initially, trigger the overflow, and rely on the corrupted bytes being larger than the original value. Such scenario would transform a weak primitive into a much more useful one, giving us a better starting point for the rest of the exploit.

However, after searching QEMU for such objects we didn't find a suitable target. The main problem was that, in most cases, the field we wanted to corrupt was preceded by one or more pointers. That would have been acceptable if those pointers were unused, but in every candidate object we examined they were still live. As a result, the heap overflow would corrupt them with effectively random bytes, causing an invalid dereference and crashing QEMU before we could achieve our desired guest-to-host escape.

At that point, we turned our attention to the glibc allocator. This is usually not the first choice in such targets - allocator techniques are often more version-specific and less portable than program-specific primitives (for example, type confusion on known object layouts). So allocator attacks are often a fallback once object-level paths are exhausted.

Glibc Allocator

The glibc allocator has already been studied and documented extensively, so we will only cover the basics relevant to this exploit. A good resource for both current and older attack techniques is how2heap.

Chunk Layout and Bins

A chunk looks like this:

+0x0 +0x8

+-------------+-------------+

| prev_size | size |

+---------------------------+

+0x10 | 41 41 41 41 41 41 41 41 |

| |

| 41 41 41 41 41 41 41 41 |

| |

| . . . |

The first 16 bytes form the chunk header. It consists of the prev_size field at offset 0x0 and the size field at offset 0x8. As the name suggests, prev_size stores the size of the previous chunk and is only used when that chunk is free, while size stores the size of the current chunk and three special bits of which PREV_INUSE and IS_MMAPPED are relevant for this blog post. The actual chunk data begins at offset 0x10.

Freed chunks are organized into different bins depending on their size and state. For this writeup, the important one is the per-thread cache, or tcache. Tcache stores recently freed chunks in size-segregated singly linked lists and is generally the first place glibc looks when servicing small allocations.

free() path

Let’s first look at the free() path in glibc 2.40:

__libc_free (void *mem)

{

mstate ar_ptr;

mchunkptr p;

p = mem2chunk (mem);

if (chunk_is_mmapped (p))

{

munmap_chunk (p);

}

else

{

MAYBE_INIT_TCACHE ();

ar_ptr = arena_for_chunk (p);

_int_free (ar_ptr, p, 0);

}

}

We can see that if the IS_MMAPPED bit is set in the corrupted size field, glibc will call munmap_chunk, which internally checks that prev_size + size is page-aligned. To reach the size field, we first have to overwrite the entire 8-byte prev_size field with uncontrolled data. The chance that a corrupted prev_size + size value still ends up page-aligned is extremely small. In practice, if IS_MMAPPED is set, the process will almost certainly abort before we can make use of the corruption.

Assuming IS_MMAPPED is not set, execution continues into _int_free:

static void

_int_free (mstate av, mchunkptr p, int have_lock)

{

INTERNAL_SIZE_T size;

size = chunksize (p);

/* Little security check which won't hurt performance: the

allocator never wraps around at the end of the address space.

Therefore we can exclude some size values which might appear

here by accident or by "design" from some intruder. */

if (__builtin_expect ((uintptr_t) p > (uintptr_t) -size, 0)

|| __builtin_expect (misaligned_chunk (p), 0))

malloc_printerr ("free(): invalid pointer");

/* We know that each chunk is at least MINSIZE bytes in size or a

multiple of MALLOC_ALIGNMENT. */

if (__glibc_unlikely (size < MINSIZE || !aligned_OK (size)))

malloc_printerr ("free(): invalid size");

check_inuse_chunk(av, p);

[...]

The first check verifies that the chunk pointer itself is not misaligned. Since we do not control the pointer, this is not particularly relevant here.

The next check, however, ensures that the size field is 16-byte aligned. This means that the low byte we overwrite in size must preserve alignment while also avoiding the IS_MMAPPED bit. Under those constraints, exploiting the bug through size corruption looked very unreliable at first.

Still, we wanted to check how this behaved in the latest glibc 2.43:

void

__libc_free (void *mem)

{

mchunkptr p;

p = mem2chunk (mem);

INTERNAL_SIZE_T size = chunksize (p);

if (__glibc_unlikely (misaligned_chunk (p)))

return malloc_printerr_tail ("free(): invalid pointer");

#if USE_TCACHE

if (__glibc_likely (size < mp_.tcache_max_bytes))

{

[...]

return tcache_put (p, tc_idx);

}

It is easy to notice that, when taking the tcache path, there are essentially no integrity checks on the size field beyond the basic size-range decision needed to determine whether the chunk fits into tcache. The only explicit check here is that the pointer itself is aligned, which is not something we care about.

In fact, even the version prior to 2.43 still performed more validation on the tcache path by calling check_inuse_chunk ([1]):

void

__libc_free (void *mem)

{

mchunkptr p;

p = mem2chunk (mem);

INTERNAL_SIZE_T size = chunksize (p);

if (__glibc_unlikely (misaligned_chunk (p)))

return malloc_printerr_tail ("free(): invalid pointer");

check_inuse_chunk (arena_for_chunk (p), p); // [1]

#if USE_TCACHE

if (__glibc_likely (size < mp_.tcache_max_bytes && tcache != NULL))

[...]

This means that as long as we can reliably force the corrupted chunk down the tcache path, we no longer need to worry much about integrity checks on size, because on the latest 2.43 glibc they are non-existent.

With that in mind, the idea we settled on was to allocate a chunk whose size field was initially 0x200, then trigger the overflow and corrupt only its low byte. If the byte written is at least 0x10, the resulting value would correspond to a larger, tcache-eligible, size in range [0x210, 0x2f0]. That would let us free the chunk as an oversized entry into the tcache freelist, which we could later reclaim and overlap chunks for a better primitive.

This approach gives us much better odds of success. In fact, with the stream configuration we use later, we can make this behavior reliable enough to exploit consistently.

Heap Spraying

With that idea in mind, we now need a way to shape the heap so that a 0x200-sized chunk is placed immediately after the vulnerable virtio-snd buffer. In addition, we need to drain any existing entries from the relevant tcache freelist so that it is not full when we later free the corrupted oversized chunk.

Unfortunately, while virtio-snd does provide some heap spraying primitives through its buffer allocations, they are fairly limited. For example, we could only allocate up to 64 buffers at a time. On top of that, stream->queue is a FIFO queue, so we could not control the order in which those buffers were freed - they would always be released in the same order they were inserted.

For the purposes of this blog post, we therefore enabled another virtio device to help with heap shaping.

virtio-9p

virtio-9p is a paravirtualized filesystem device that lets the guest access a directory exported by the host through the 9P protocol. The part that interested us most was its handling of extended attributes, or xattrs.

Through a P9_TXATTRCREATE request, we can allocate host-side buffers for both the .name and .value fields, with the size of .value being directly controlled by the guest.

static void coroutine_fn v9fs_xattrcreate(void *opaque)

{

int flags, rflags = 0;

int32_t fid;

uint64_t size;

ssize_t err = 0;

V9fsString name;

size_t offset = 7;

V9fsFidState *file_fidp;

V9fsFidState *xattr_fidp;

V9fsPDU *pdu = opaque;

v9fs_string_init(&name);

err = pdu_unmarshal(pdu, offset, "dsqd", &fid, &name, &size, &flags);

if (err < 0) {

goto out_nofid;

}

[...]

if (size > P9_XATTR_SIZE_MAX) {

err = -E2BIG;

goto out_nofid;

}

[...]

v9fs_string_init(&xattr_fidp->fs.xattr.name);

v9fs_string_copy(&xattr_fidp->fs.xattr.name, &name);

xattr_fidp->fs.xattr.value = g_malloc0(size);

}

Because the .name field is handled as a string, embedded null bytes are not preserved, which makes it less useful for our purposes. It also introduces some extra allocation noise into the heap, since creating an xattr allocates both .name and .value, not just the .value we actually care about. But we will get around this later in the blog post.

The .value field, however, is much more interesting: it gives us a guest-controlled heap allocation of an arbitrary size. Each of these allocations is tied to its own xattr FID, which means it stays alive for as long as that FID remains live. In practice, this gives us a large number of persistent host-side heap objects that we can manage individually.

Once allocated, we can write arbitrary bytes into the .value buffer through a P9_TWRITE request on the corresponding xattr FID. We can also read the contents back with P9_TREAD, which is useful later when turning overlap into stronger primitives. Finally, we can free any individual allocation at any time by issuing a P9_TCLUNK request on that same FID.

This gives us a very strong heap shaping primitive in QEMU - allocate on demand, choose the size precisely (up to 65536 bytes, which is more than enough here), fully control the contents of the allocation, keep it alive as long as needed, and free it selectively later.

Setting the Heap Layout

Ideally, we want a contiguous heap region consisting only of .value allocations, like this:

0x200 0x200 0x200 0x200 0x200

+----------+----------+----------+----------+----------+

| | | | | |

| .value A | .value B | .value C | .value D | .value E |

| | | | | |

+----------+----------+----------+----------+----------+

This lets us later create holes by freeing every other .value allocation. Those freed chunks enter the freelist, allowing the overflowing virtio-snd buffer to be allocated into one of those holes and overflow into the size field of the next live .value chunk.

Of course, we do not know the initial state of the heap. In practice, it is fragmented and already contains many freelist entries. Fortunately, this is not a problem for glibc, since the allocator is deterministic. By allocating enough chunks of the size we want, malloc will first consume any suitable entries already present in the freelist. Once those are exhausted, subsequent allocations will be served from the top chunk in a contiguous fashion, giving us the continuous region we need.

As mentioned earlier, v9fs_xattrcreate always allocates two chunks: one for .name and one for .value. We want to avoid having .name chunks inside our main contiguous region. There are two ways to approach this:

- Make

.namelarger than the mmap threshold, so it is allocated from a separate mapping rather than from the main heap. This would give us the layout we want, but at the cost of dramatically increasing memory usage during heap spraying. - Prepare a separate region whose sole purpose is to absorb

.name-sized allocations. Later, when we start building the main contiguous region, malloc will satisfy.nameallocations from that separate freelist instead of placing them next to our.valuechunks.

Separating .name allocations

We chose the second option. However, it is not as simple as issuing v9fs_xattrcreate for N .name-sized allocations and then freeing them.

At this point, we already know that v9fs_xattrcreate always allocates two chunks: one for .name and one for .value. If we simply call it with .value sized the same as .name, we get a layout like this:

0x20 0x20 0x20 0x20 0x20

+----------+----------+----------+----------+----------+

| | | | | |

| .name A | .value A | .name B | .value B | .name C |

| | | | | |

+----------+----------+----------+----------+----------+

With that heap state, issuing a P9_TCLUNK request would first free .name A and then .value A. When .value A is freed, the allocator sees that the preceding chunk .name A is already free and immediately consolidates the two. As a result, instead of ending up with many reusable .name-sized chunks in the freelist, we would just create a large consolidated free chunk, which is not what we want.

To avoid that, we take advantage of the fact that chunks freed into tcache are not consolidated. It is also important to note that tcache maintains a separate freelist for each size class within the tcache range, and in this glibc version each such freelist can hold up to 16 entries.

We begin by draining the tcache freelist for every relevant size class by allocating 16 chunks of each size. Throughout this process, the .name allocation remains fixed at size 0x20. We first allocate 16 xattrs whose .value size is 0x30. After that, we allocate another 16 xattrs, this time with .value size 0x40, and continue in the same way for each tcache size class.

This yields the following layout:

0x20 0x30 0x20 0x30

+---------+--------------+---------+--------------+- - - - -

| | | | |

| .name A | .value A | .name B | .value B | . . .

| | | | |

+---------+--------------+---------+--------------+- - - - -

0x20 0x40 0x20 0x40

+---------+------------------+---------+------------------+- - - - -

| | | | |

| .name C | .value C | .name D | .value D | . . .

| | | | |

+---------+------------------+---------+------------------+- - - - -

At this point, we can free all allocations created during this phase. Because we emptied every tcache freelist, the first 16 .name chunks end up in the 0x20 tcache bin, along with the interleaved .value chunks of size 0x30. The next 16 .name chunks are interleaved with .value chunks of size 0x40; when freed, those .value chunks also go into their corresponding tcache bin instead of consolidating with the adjacent free .name chunks. Repeating this across all tcache sizes leaves us with a large region of free .name-sized chunks that will later be served to the .name allocations of the main contiguous spray - leaving us with the desired layout of adjacent .value chunks.

Corrupting the Size

The input format is guest-controlled, and we choose u8 (unsigned 8-bit PCM). As noted earlier, silence in u8 is centered at 0x80 (rather than 0x00 in s8), which biases this uncontrolled overflow toward larger byte values and increases the chance that the corrupted size grows.

As we already concluded, AUD_read is called with the amount:

MIN(available, (stream->params.period_bytes - buffer->size))

And as mentioned earlier, stream->params.period_bytes is fully guest-controlled, so we can set it such that the overflow reaches exactly far enough to overwrite only the lowest byte of the next chunk's size field.

With the desired heap layout of repeated 0x200-sized .value chunks in place, we can then free every other one:

Free Free

+----------+----------+----------+----------+----------+

| |..........| |..........| |

| .value A |..........| .value C |..........| .value E |

| |..........| |..........| |

+----------+----------+----------+----------+----------+

We then allocate the overflowing virtio-snd buffer into one of those holes, start the stream, and let it overflow into the size field of the .value chunk directly next to it:

+----------+

| | Free

+----------| buffer |----------+----------+----------+

| | | |..........| |

| .value A +----------+ .value C |..........| .value E |

| | | |..........| |

+----------+ +----------+----------+----------+

After the overflow, the virtio-snd buffer is freed by QEMU. We then refill all of the holes created for the virtio-snd buffer by allocating new 0x200-sized chunks in their place. At that point, we are left with a layout similar to the original one, except that one .value chunk now has a corrupted and likely oversized size field:

Oversized chunk

|

+------+------+

| |

v v

+----------+----------+----------+----------+----------+

| | | | | |

| .value A | .value X | .value C | .value Y | .value E |

| | | | | |

+----------+----------+----------+----------+----------+

At this point, we can free the chunks left over from the initial contiguous spray. Because one chunk now has a corrupted, larger size field, freeing it causes a single oversized chunk to be inserted into one of the tcache bins in the range [0x210, 0x2f0]:

Free

0x210-0x2f0

|

+------+------+

Free | | Free

0x200 v v 0x200

+----------+----------+----------+----------+----------+

|..........| |..........| |..........|

|..........| .value X |..........| .value Y |..........|

|..........| |..........| |..........|

+----------+----------+----------+----------+----------+

We then once again fill the remaining holes and recover the oversized chunk by simply allocating every size in the possible range ([0x210, 0x2f0]).

.value B

+-------------+

| |

v v

+----------+----------+----------+--+-------+----------+

| | | |//| | |

| .value A | .value X | |//| | .value C |

| | | |//| | |

+----------+----------+----------+--+-------+----------+

^ ^

| |

+----------+

.value Y

After reclaiming it, we use that chunk to overwrite the size of the next chunk again, but this time we set it to 0x400 - this gives us a chunk that fully overlaps the chunk next to it, leaving us in the following final state:

.value Y extended

|

+----------+----------+

| |

v v

+----------+----------+----------+----------+----------+

| | | | | |

| .value A | .value X | .value B | .value Y | .value C |

| | | | | |

+----------+----------+----------+----------+----------+

Leaking a Heap Address

We begin by leaking a heap address, since that is the simplest target at this stage. More specifically, we want the address of a heap chunk whose contents we control. Once we have that, we gain a region of memory at a known address with controlled contents, which is useful for placing fake objects or reclaiming the same location with other objects and later inspecting them with an arbitrary read primitive.

To do this, we abuse the forward (fd) pointers used by tcache freelists. Modern glibc protects these pointers with a mitigation known as safe-linking. Instead of storing the next free chunk pointer directly, glibc encodes it by XORing it with the address of the current chunk, shifted right by 12:

fd = next ^ (curr >> 12)

When a tcache bin is empty and a single chunk is inserted into it, next is NULL because there is no following entry. In that case, the encoding becomes:

fd = 0 ^ (curr >> 12)

So if we free a single chunk into an empty tcache bin, its fd field is effectively just the chunk address shifted right by 12.

In the overlap we achieved earlier:

.value Y extended

|

+--------------------+--------------------+

| |

| |

v v

+--------------------+--------------------+

| | |

| .value Y | .value C |

| | |

+--------------------+--------------------+

We first free .value C into tcache and read its contents through the oversized .value Y. This gives us .value C >> 12. That is not yet the exact address of .value C, since the lower 12 bits are lost.

To recover the exact address of a controlled heap chunk, we reclaim .value C, then free a different controlled chunk into the same tcache bin. After that, we free .value C again. This time, next is no longer NULL, but instead points to that controlled chunk, so the encoded forward pointer becomes:

fd = next ^ (curr >> 12)

Since we already know curr >> 12 from the first leak, we can read the new fd value from .value C and recover the exact address of next by reversing the XOR:

next = fd ^ (curr >> 12)

This gives us the exact address of a heap chunk whose contents we control.

Arbitrary Read and Write

Having a controlled chunk at a known address lets us repurpose .value C into an arbitrary read/write primitive. To do that, we go back to the 9P device.

Recall v9fs_xattrcreate:

static void coroutine_fn v9fs_xattrcreate(void *opaque)

{

uint64_t size;

V9fsFidState *file_fidp;

V9fsFidState *xattr_fidp;

[...]

file_fidp = get_fid(pdu, fid);

[...]

/* Make the file fid point to xattr */

xattr_fidp = file_fidp;

xattr_fidp->fs.xattr.len = size;

xattr_fidp->fs.xattr.value = g_malloc0(size);

[...]

The important detail here is that an xattr FID stores both the backing pointer and its length inside the surrounding V9fsFidState object. In other words, if we can place a V9fsFidState where .value C currently sits, the overlapping .value Y chunk can overwrite V9fsFidState.fs.xattr.value and V9fsFidState.fs.xattr.len. That would immediately give us arbitrary read and write through P9_TREAD and P9_TWRITE.

At this point, .value C is a 0x200 chunk, while V9fsFidState falls into the 0x120 size class. Before freeing .value C, we therefore use the oversized .value Y chunk to change its size to match V9fsFidState. Once .value C is freed, it is inserted into the 0x120 tcache bin.

.value Y extended

|

+--------------------+--------------------+

| |

| Free |

v 0x120 v

+--------------------+---------------+----+

| |...............| |

| .value Y |...............| |

| |...............| |

+--------------------+---------------+----+

After that, we can simply allocate a new V9fsFidState with a P9_TWALK request and a fresh FID - this reaches alloc_fid, which allocates a new V9fsFidState:

static void coroutine_fn v9fs_walk(void *opaque)

{

V9fsFidState *fidp;

V9fsFidState *newfidp = NULL;

[...]

if (fid == newfid) {

[...]

} else {

newfidp = alloc_fid(s, newfid);

if (newfidp == NULL) {

err = -EINVAL;

goto out;

}

newfidp->uid = fidp->uid;

v9fs_path_copy(&newfidp->path, &path);

}

[...]

}

static V9fsFidState *alloc_fid(V9fsState *s, int32_t fid)

{

V9fsFidState *f;

f = g_hash_table_lookup(s->fids, GINT_TO_POINTER(fid));

if (f) {

/* If fid is already there return NULL */

BUG_ON(f->clunked);

return NULL;

}

f = g_new0(V9fsFidState, 1);

[...]

After it is allocated, it will be placed into that freed region in place of the old .value C chunk.

.value Y extended

|

+--------------------+--------------------+

| |

| |

v v

+--------------------+---------------+----+

| | |....|

| .value Y | V9fsFidState |....|

| | |....|

+--------------------+---------------+----+

Leaking a QEMU Address

We now have an arbitrary read/write primitive and a controlled chunk at a known address. The next step is to leak a QEMU code address so we can later redirect execution. To do this, we combine the arbitrary read primitive with the known-address chunk: we free that chunk, replace it with an object that contains pointers into QEMU's code or data, and then use arbitrary read to leak its fields.

For this, we go back to virtio-snd and its buffer allocations. Recall virtio_snd_handle_rx_xfer:

static void virtio_snd_handle_rx_xfer(VirtIODevice *vdev, VirtQueue *vq)

{

VirtIOSound *vsnd = VIRTIO_SND(vdev);

VirtIOSoundPCMBuffer *buffer;

VirtQueueElement *elem;

size_t msg_sz, size;

uint32_t stream_id;

[...]

for (;;) {

VirtIOSoundPCMStream *stream;

elem = virtqueue_pop(vq, sizeof(VirtQueueElement));

if (!elem) {

break;

}

/* get the message hdr object */

msg_sz = iov_to_buf(elem->out_sg,

elem->out_num,

0,

&hdr,

sizeof(virtio_snd_pcm_xfer));

if (msg_sz != sizeof(virtio_snd_pcm_xfer)) {

goto rx_err;

}

stream_id = le32_to_cpu(hdr.stream_id);

[...]

WITH_QEMU_LOCK_GUARD(&stream->queue_mutex) {

size = iov_size(elem->in_sg, elem->in_num) -

sizeof(virtio_snd_pcm_status);

buffer = g_malloc0(sizeof(VirtIOSoundPCMBuffer) + size); // [1]

buffer->elem = elem;

buffer->vq = vq; // [2]

buffer->size = 0;

buffer->offset = 0;

QSIMPLEQ_INSERT_TAIL(&stream->queue, buffer, entry);

}

At [1], QEMU allocates a VirtIOSoundPCMBuffer whose size depends on the guest-provided iovec, and at [2] it stores the VirtQueue *vq pointer into the buffer.

This VirtQueue structure contains some useful fields:

struct VirtQueue

{

[...]

VirtIOHandleOutput handle_output;

VirtIODevice *vdev;

[...]

};

The .handle_output field is a callback, specifically a function pointer that gets called when the virtqueue receives a notification from the guest, and .vdev is the pointer passed to it as the first argument:

static void virtio_queue_notify_vq(VirtQueue *vq)

{

if (vq->vring.desc && vq->handle_output) {

VirtIODevice *vdev = vq->vdev;

[...]

vq->handle_output(vdev, vq);

[...]

}

}

This means that if we free the known-address chunk and replace it with a VirtIOSoundPCMBuffer - which is straightforward, since we control the buffer allocation size through the in_sg iovec - we can use the arbitrary read primitive to read its .vq pointer, then follow that pointer to leak .handle_output from the VirtQueue structure. In our case, that field points to virtio_snd_handle_rx_xfer, which gives us QEMU's base address.

From there, we can use the arbitrary read primitive once more to read a resolved entry from QEMU's GOT, leaking a libc address. With that, we can compute the address of system.

RIP Control

At this point, we have everything we need: an arbitrary read/write primitive, a QEMU code leak, and the address of system. To hijack control flow, we do not need to look far - we just described a function pointer on the heap at a known address: VirtQueue.handle_output.

We overwrite .handle_output with the address of system and write the command string we want to execute into memory using our arbitrary write. Then we overwrite .vdev with the address of that command string, so it is passed as the first argument.

Then, we simply notify the virtqueue from the guest. QEMU enters virtio_queue_notify_vq, which calls vq->handle_output(vq->vdev) - or, after our overwrites, system(command).

Finally, with all of this, we achieve a reliable guest-to-host escape and execute gnome-calculator on the host system:

We recently achieved guest-to-host escape by exploiting a QEMU 0day.

— OtterSec (@osec_io) March 5, 2026

We’ll share details on a new technique leveraging the latest glibc allocator behavior and what we believe is a novel QEMU-specific heap spray/RIP-control primitive.

Writeup coming next week.

The final exploit, targeting QEMU commit ece408818d27f745ef1b05fb3cc99a1e7a5bf580 (Feb 13, 2026) and the latest glibc 2.43, can be found here.

Special thanks to William Liu for proofreading this post and helping us polish it before publication.

Conclusion

Starting from a heap overflow where the written bytes are effectively random, we showed how careful heap grooming and a favorable change in glibc 2.43's allocator can turn even a single byte of uncontrolled corruption into a reliable guest-to-host escape.

More broadly, this exploit is a reminder that weak-looking primitives should not be dismissed too quickly - with the right heap layout and target, even highly constrained corruption can be enough.